This course, "Web Applications with Large Language Model Fast Inference," is designed for individuals eager to delve into deep learning model training and deployment using Python, C/C++, and JavaScript. You'll work with Docker and with TensorFlow, PyTorch, and Keras models, optimizing them for deployment across various sectors. Learn to deploy quantized models onto web pages developed with React, JavaScript, and Flask, and to integrate reinforcement learning with large language models.

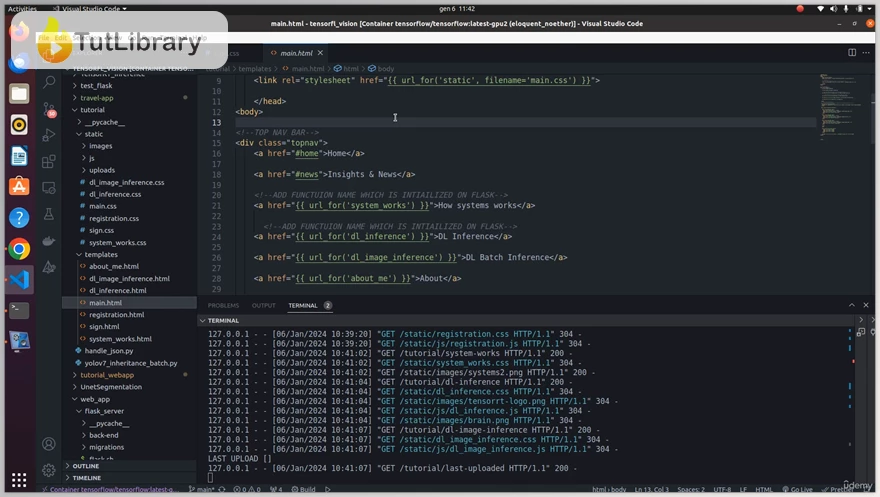

Gain proficiency in C/C++ programming, Docker, and JavaScript web development. The course covers React, JavaScript, HTML, CSS, Bootstrap, and Transformer-based natural language processing. You will also learn to build a Python Flask REST API with MySQL, write Dockerfiles, Docker Compose files, and Docker Compose debug files, and configure plugin packages in Visual Studio Code.

Learn to convert prebuilt models to ONNX, run ONNX inference on images with C++, and then convert the ONNX model to a TensorRT engine using the C++ runtime and compile-time APIs. You'll explore TensorRT engine inference on images and videos, comparing metrics and results between TensorRT and ONNX inference. Lastly, prepare for object-oriented C++ inference and deployment on edge devices and in the cloud.

Web Applications with Large Language Model Fast Inference Table of Contents:

- Introduction to Docker and its usage

- Advanced Docker Usage

- Understanding OpenCL and OpenGL and their applications

- (LAB) Installation and Configuration of TensorFlow and PyTorch with Docker

- (LAB) Dockerfile, Docker Compose, and Docker Compose Debug file configuration

- (LAB) Comparison of Different YOLO versions and their suitability for different problems

- (LAB) Utilizing Jupyter Notebook Editor and Visual Studio for Coding Skills

- (LAB) Full-stack and C++ coding exercises preparation

- (LAB) TensorRT Precision Float 32/16 Model Quantization

- Key Differences: Explicit vs. Implicit Batch Size

- (LAB) TensorRT Precision INT8 Model Quantization

- (LAB) Visual Studio Code Setup and Docker Debugger with VS and GDB Debugger

- (LAB) Understanding ONNX framework in C++ and its application to custom problems

- (LAB) Understanding the TensorRT Framework and its application to custom problems

- (LAB) Solving Custom Detection, Classification, and Segmentation problems and inference on images and videos

- (LAB) Basic Object-Oriented Programming in C++

- (LAB) Advanced Object-Oriented Programming in C++

- (LAB) Deep Learning Problem-Solving Skills on Edge Devices and Cloud Computing with C++ Programming Language

- (LAB) Generating High-Performance Inference Models on Embedded Devices to achieve high precision, FPS detection, and less GPU memory consumption

- (LAB) Visual Studio Code with Docker

- (LAB) GDB Debugger with SonarLint and SonarQube

- (LAB) YOLOv4 ONNX inference with OpenCV C++ DNN libraries

- (LAB) YOLOv5 ONNX inference with OpenCV C++ DNN libraries

- (LAB) YOLOv5 ONNX inference with Dynamic C++ TensorRT Libraries

- (LAB) C++ (11/14/17) Compiler Programming Exercises

- Key Differences: OpenCV and CUDA / OpenCV and TensorRT

- (LAB) Deep Dive into React Development with Axios Front-End REST API

- (LAB) Deep Dive into Flask REST API with React and MySQL

- (LAB) Deep Dive into Text Summarization Inference on Web App

- (LAB) Deep Dive into BERT (LLM) Fine-tuning and Emotion Analysis on Web App

- (LAB) Deep Dive into Distributed GPU Programming with Natural Language Processing (Large Language Models)

- (LAB) Deep Dive into Generative AI use cases, project lifecycle, and model pre-training

- (LAB) Fine-tuning and evaluating large language models

- (LAB) Reinforcement learning and LLM-powered applications, ALIGN Fine-tuning with User Feedback

- (LAB) Quantization of Large Language Models with Modern NVIDIA GPUs

- (LAB) C++ OOP TensorRT Quantization and Fast Inference

- (LAB) Deep Dive into Hugging Face Library

- (LAB) Translation, Text Summarization, and Question Answering

- (LAB) Sequence-to-sequence models, encoder-only models, and decoder-only models

- (LAB) Defining the terms Generative AI, large language models, prompt, and describing the transformer architecture that powers LLMs

- (LAB) Discussing computational challenges during model pre-training and determining how to efficiently reduce the memory footprint

- (LAB) Describing how fine-tuning with instructions using prompt datasets can improve performance on one or more tasks

- (LAB) Explaining how PEFT decreases computational cost and overcomes catastrophic forgetting

- (LAB) Describing how RLHF uses human feedback to improve the performance and alignment of large language models

- (LAB) Discussing the challenges that LLMs face with knowledge cut-offs, and explaining how information retrieval and augmentation techniques can overcome these challenges
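The INT8 quantization lab listed above concerns mapping 32-bit floating-point values to 8-bit integers. As a rough illustration only, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is not TensorRT's calibration procedure, and the function names `quantize_int8` and `dequantize_int8` are hypothetical, chosen for this example.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to signed 8-bit codes.

    Illustrative assumption: a single scale for the whole list, derived
    from the largest absolute value (no calibration data involved).
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # The scale maps the largest magnitude onto 127; use 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the symmetric range.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    codes, scale = quantize_int8(weights)
    restored = dequantize_int8(codes, scale)
    print("codes:", codes)
    print("scale:", scale)
    print("restored:", restored)
```

Each restored value differs from the original by at most about half the scale, which is the precision given up in exchange for storing one byte per value instead of four.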

Who is this course for?

- University students

- Recent graduates

- Professionals seeking to deploy Deep Learning Models on Edge Devices

- Enthusiastic AI learners

- Embedded software engineers

- Developers specializing in natural language processing

- Engineers experienced in machine learning and deep learning

- Full-stack developers proficient in both JavaScript and Python

